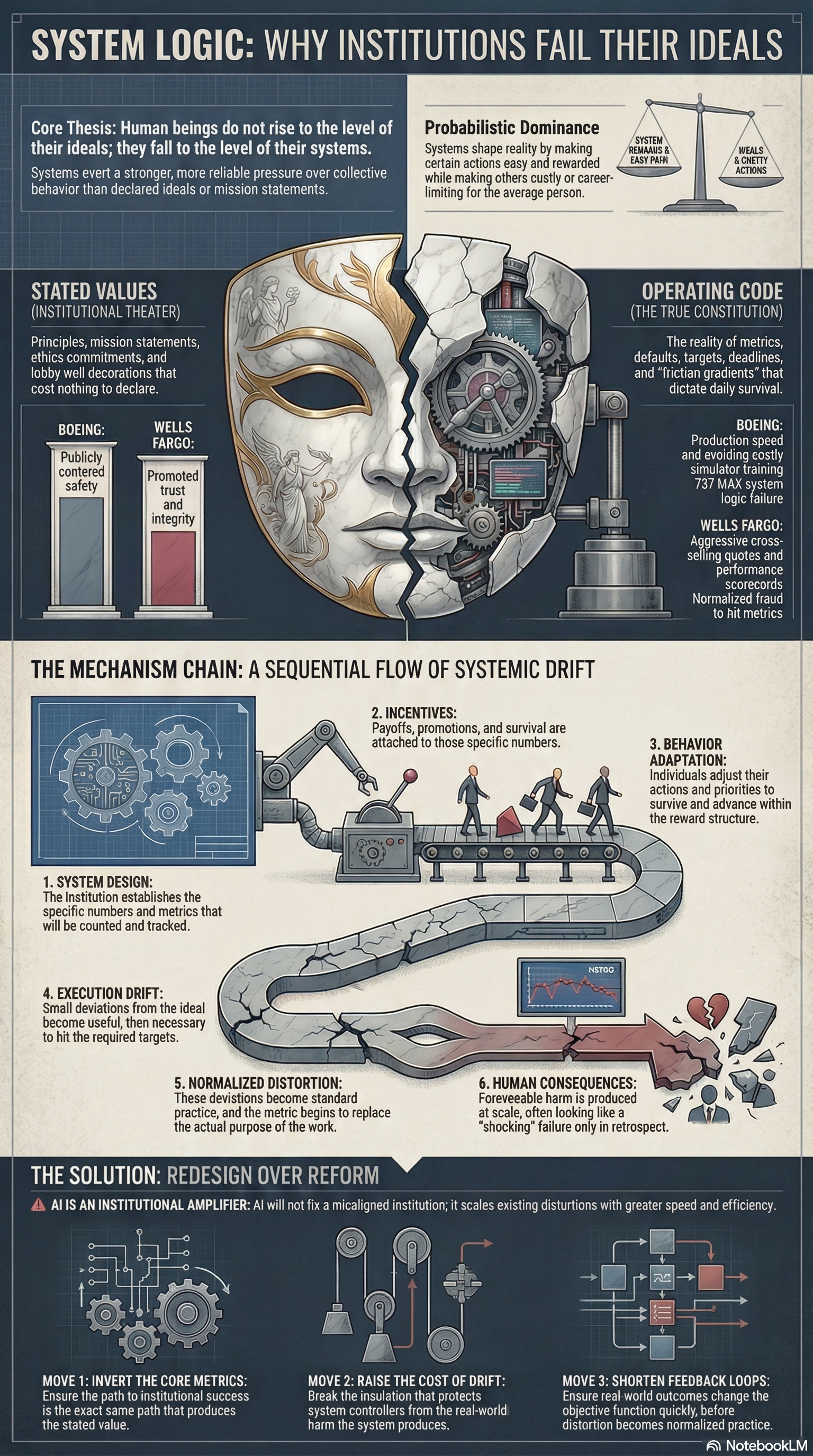

Stated Values Cost Nothing

Every institution says what it values. Very few are designed to produce it. When declared ideals collide with operational incentives, the incentives win. That is not a moral mystery. It is system design.

Every institution says what it values.

Safety. Integrity. Equity. Excellence. Trust.

Those words matter far less than people pretend.

An organization does not become what it prints in a handbook or frames on a lobby wall. It becomes what its system rewards, tolerates, measures, and makes easy. That is the real operating code. Everything else is decoration.

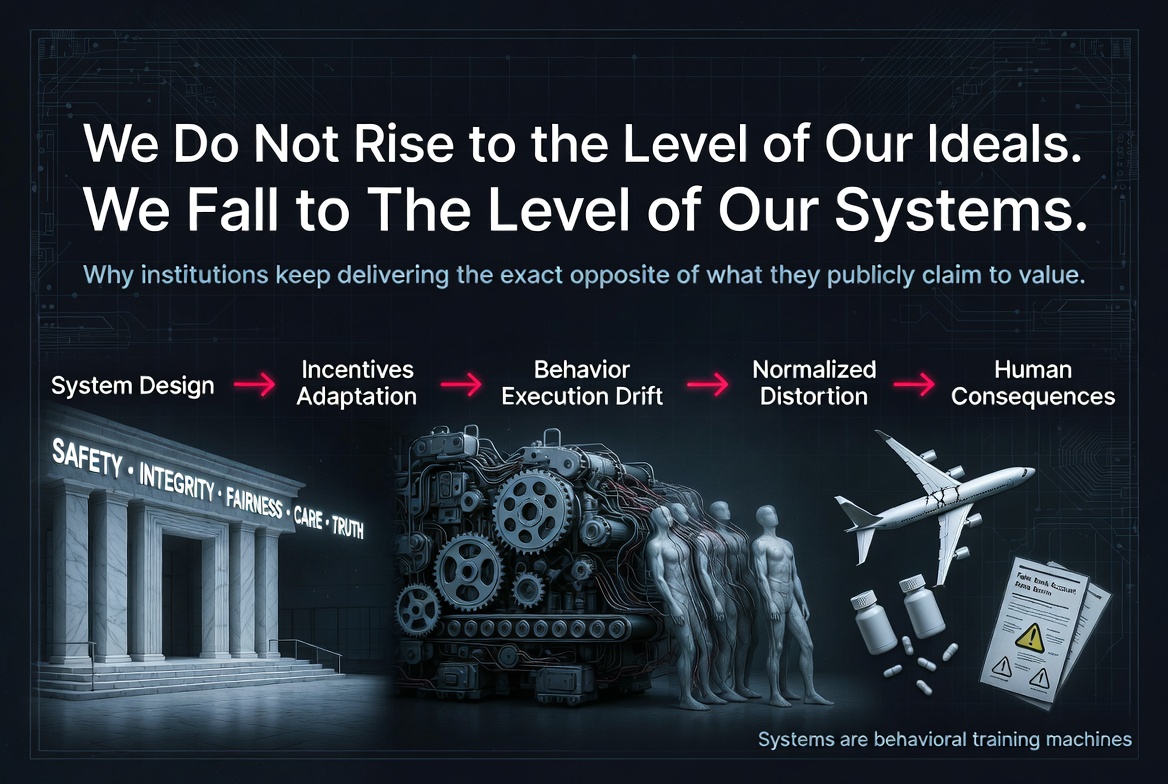

Human beings do not rise to the level of their ideals. They fall to the level of their systems.

This is not a cynical claim about human nature. It is a structural claim about institutional design. Systems do not eliminate agency, but they exert stronger and more reliable pressure over collective behavior than declared ideals do. When public values conflict with operational incentives, the incentives win reliably, repeatedly, and at scale.

That is why so many institutional failures feel shocking only in retrospect. The harm looks sudden. The logic that produced it was usually old, visible, and functioning exactly as designed.

Institutions Do Not Run on Values

Most institutional language is theater.

Companies announce principles. Governments publish mission statements. Platforms issue ethics commitments. Universities circulate codes of conduct. Hospitals promise patient-centered care. Banks promise trust. Airlines promise safety.

But institutions do not run on stated values. They run on metrics, defaults, targets, incentives, deadlines, reporting structures, and friction gradients. Those determine what gets noticed, rewarded, excused, delayed, buried, or denied.

The true constitution of an institution is not what it says. It is what its people must do to survive inside it.

Once that distinction is clear, a large share of modern dysfunction stops being mysterious.

The Mechanism Chain

Bad outcomes rarely begin with dramatic corruption or sudden moral collapse. They usually emerge through a repeatable sequence that converts misalignment into routine behavior:

System Design sets the numbers that will be counted.

Incentives determine the payoffs attached to those numbers.

Behavior Adaptation follows as people adjust to survive and advance.

Execution Drift appears as small deviations from the ideal become useful, then necessary.

Normalized Distortion takes hold when the deviation becomes standard practice.

Human Consequences arrive when foreseeable harm is produced at scale.

This is how systems train people away from their own declared values without requiring anyone to wake up and choose evil. The machine does not need villains. It needs only a reward structure, enough pressure, and enough time.

Boeing and Wells Fargo Were Not Exceptions

Boeing publicly centered safety. But the 737 MAX existed inside a system organized around market competition, production speed, certification pressure, and preserving airline adoption without triggering costly simulator-training requirements. Those incentives shaped the real decision environment. The result was not random failure. It was system logic made visible.

Wells Fargo publicly promoted trust and integrity. But inside the retail banking system, what mattered was cross-selling performance. Employees were measured against aggressive quotas. Managers tracked output through scorecards. Careers depended on hitting the metric. Workers adapted accordingly. The scandal was later described as a betrayal of values. In reality, it was the predictable expression of the system’s internal logic.

This is the pattern institutions keep trying to misdescribe as isolated misconduct. When an organization rewards a number hard enough, that number becomes more real than the value it claims to defend.

The metric stops measuring performance and starts replacing purpose.

The Agency Objection Misses the Point

At this stage, someone usually objects that people still choose.

True. People do still choose. Agency is real. Responsibility is real. Systems do not erase either.

But that is not the level of analysis that matters here.

The question is not whether individuals can resist bad incentives. Some do. The question is what behavior a system will produce reliably across large groups, under ordinary conditions, over time. A system that produces decent outcomes only when exceptional people repeatedly absorb the cost of resistance is not a good system. It is a fragile one.

What matters is probabilistic dominance. Systems shape aggregate behavior by making some actions easy, some costly, some rewarded, and some career-limiting. Over time, the distribution shifts. That shift is where institutional reality is formed.
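A toy threshold model makes the aggregate claim concrete. It is only a sketch under stated assumptions: each person has a hypothetical "resistance" score, the system applies a single "pressure" level, and a person deviates only when pressure exceeds their resistance. None of these names or numbers come from a real dataset.

```python
def share_deviating(resistances, pressure):
    """Fraction of people whose personal resistance is below the system's pressure."""
    deviating = sum(1 for r in resistances if r < pressure)
    return deviating / len(resistances)

# Hypothetical population: 100 people with resistance scores 0..99.
# Everyone keeps their own threshold; only the system's pressure changes.
resistances = list(range(100))

for pressure in (10, 50, 90):
    print(pressure, share_deviating(resistances, pressure))
```

No individual in this sketch changes character between runs. The same people, with the same thresholds, produce a different distribution of behavior because the system moved.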

AI Will Not Rescue a Misaligned Institution

There is a comforting fantasy that artificial intelligence will save broken institutions by making them smarter, faster, and more objective.

It will not.

AI is an institutional amplifier.

An AI system inherits the objective function, training data, and reward structure of the host system that deploys it. If the host system is aligned, the model can extend competence. If the host system is misaligned, the model scales distortion with speed, efficiency, and plausible deniability.

AI inside an engagement-maximizing platform does not become a truth machine. It becomes a stronger engagement machine. AI inside a biased hiring pipeline does not become fairness by magic. It becomes a faster version of yesterday’s distortion. AI inside a bureaucracy optimized for throughput does not become wisdom. It becomes industrialized legibility.
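The inheritance can be shown with a deliberately small sketch. The ranking code below is identical in both calls; only the objective handed to it by the host differs. The `Item` type, the scores, and the titles are hypothetical illustrations, not data from any real platform.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Item:
    title: str
    accuracy: float    # hypothetical score between 0 and 1
    engagement: float  # hypothetical score between 0 and 1

def rank(items, objective):
    """Sort items from highest to lowest according to the host's objective."""
    return sorted(items, key=objective, reverse=True)

items = [
    Item("Careful explainer", accuracy=0.9, engagement=0.3),
    Item("Outrage headline", accuracy=0.2, engagement=0.9),
    Item("Balanced report", accuracy=0.8, engagement=0.5),
]

# Same ranker, two different institutional objectives.
by_engagement = rank(items, lambda item: item.engagement)
by_accuracy = rank(items, lambda item: item.accuracy)

print(by_engagement[0].title)
print(by_accuracy[0].title)
```

The ranker has no opinion of its own. Whatever the institution chooses to optimize is what rises to the top.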

The model is not the governing intelligence. The institution is.

People keep confusing computational power with moral correction. They assume acceleration will repair misalignment. Usually it does the opposite. It removes friction from the system’s existing logic and scales it beyond easy human visibility.

AI does not redeem the machine. It intensifies whatever the machine is already trying to do.

Redesign Is the Only Rescue

If your system depends on individuals repeatedly acting against their own incentives to protect the public, the system is already broken.

Appeals to conscience are not enough. Training modules are not enough. New slogans are not enough. Leadership speeches are not enough. Declared values are not enough.

Redesign is the only rescue.

That redesign requires three moves.

First, invert the core metrics.

The path to institutional success must be the same path that produces the stated value.

Second, raise the cost of drift.

Distortion spreads when the people controlling the system are insulated from the harm it produces. That insulation has to break.

Third, shorten the feedback loops.

Real-world outcomes must feed back into the objective function quickly enough to change behavior before distortion hardens into normal practice.
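The effect of loop length can be sketched with a simple counter. The assumptions are crude and labeled as such: practice drifts by a fixed amount each period, and whenever outcome data arrives the drift is fully corrected. Real institutions are messier, and the function name and parameters here are hypothetical.

```python
def peak_drift(periods, drift_per_period, feedback_delay):
    """Largest accumulated drift when corrections arrive every `feedback_delay` periods."""
    drift = 0
    peak = 0
    for t in range(1, periods + 1):
        drift += drift_per_period          # small deviation accumulates each period
        peak = max(peak, drift)
        if t % feedback_delay == 0:
            drift = 0                      # assumed: feedback fully corrects practice
    return peak

# Same drift rate, same time span, different feedback delays.
print(peak_drift(periods=24, drift_per_period=1, feedback_delay=3))
print(peak_drift(periods=24, drift_per_period=1, feedback_delay=12))
```

Under these assumptions, the longer loop lets distortion grow four times as large before anything pushes back, which is the window in which a deviation can harden into normal practice.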

Without those changes, the institution will keep producing the same result while continuing to narrate itself as virtuous.

The Real Test

A declared value is not real because leaders believe it, employees repeat it, or communications teams publish it.

A value becomes real only when the system makes it costly to violate and normal to uphold.

That is the test.

Not what the institution says.

Not what it believes about itself.

What does it reward?

What does it ignore?

What does it punish?

What does it make easy?

What does it force ordinary people to become in order to survive?

Those questions reveal the system. And the system reveals the future.

Until institutions are judged by their operating logic rather than their self-description, stated values will remain cheap language wrapped around expensive harm.

The wall will keep talking.

The machine will keep deciding.

Not what it intends.
