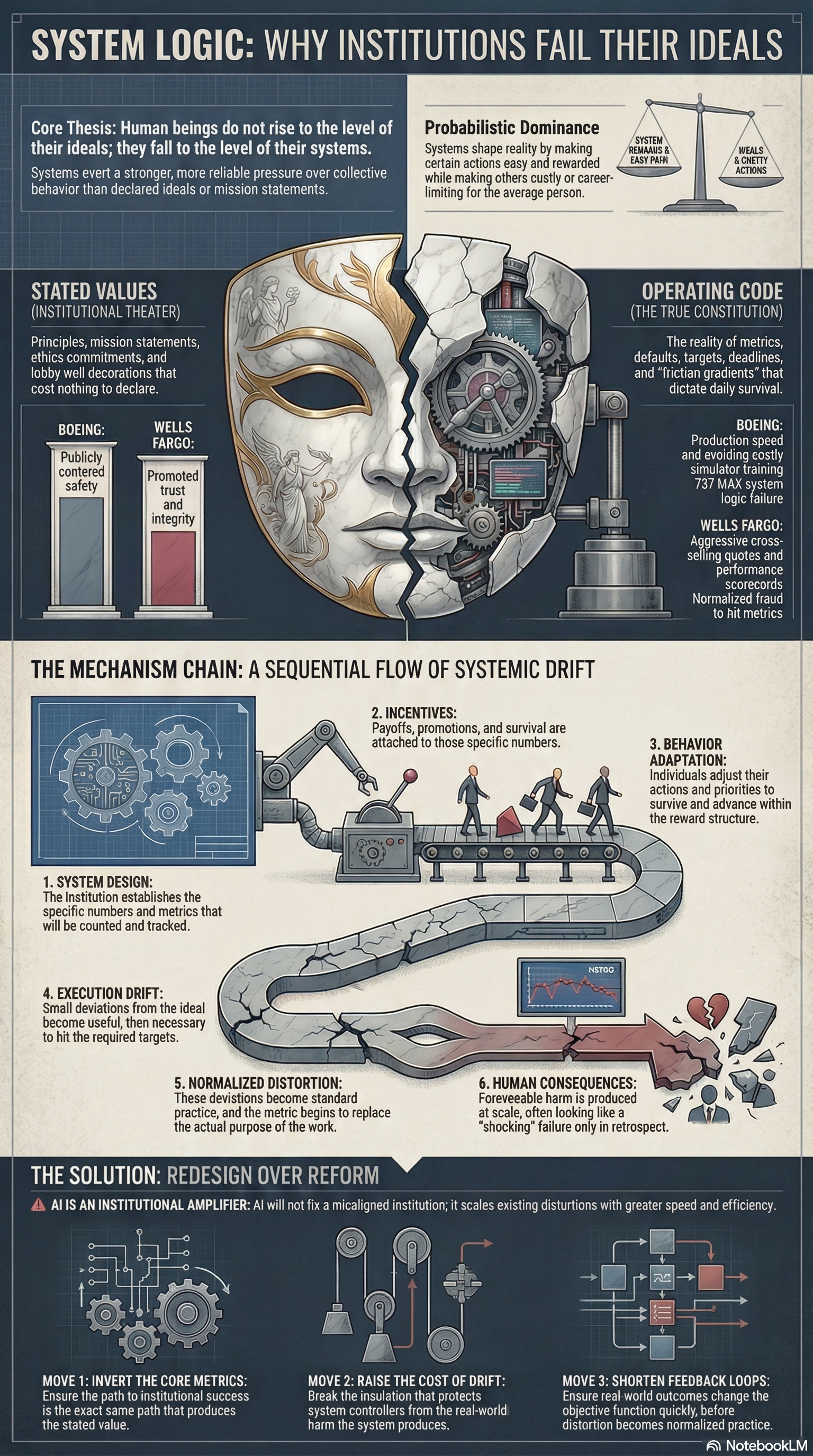

Stated Values Cost Nothing

Companion essay to the audio release.

Every institution says what it values.

Safety. Integrity. Equity. Excellence. Trust.

Those words matter far less than people pretend.

An organization does not become what it prints in a handbook, frames on a lobby wall, or posts on a careers page. It becomes what its system rewards, tolerates, measures, and makes easy. That is the real operating code. Everything else is decoration.

Human beings do not rise to the level of their ideals. They fall to the level of their systems.

This is not a cynical claim about human nature. It is a structural claim about institutional design. Systems do not eliminate agency, but they exert stronger and more reliable pressure on collective behavior than declared ideals do. When stated values collide with operational incentives, the incentives win: reliably, repeatedly, and at scale.

That is why so many institutional failures feel shocking only in retrospect. The harm appears sudden. The logic that produced it is usually old, visible, and functioning exactly as designed.

The Lie of Declared Values

Modern institutions are built on a flattering fiction: that saying the right thing is a meaningful substitute for building the right system.

Companies write value statements. Governments publish mission language. Universities issue codes of ethics. Hospitals promise patient-centered care. Platforms claim connection, safety, and inclusion. Banks promise trust. Airlines promise safety. Technology firms promise progress.

But institutions do not run on words. They run on metrics, defaults, targets, deadlines, incentives, reporting structures, and friction gradients. Those determine what gets rewarded, ignored, excused, delayed, denied, or buried.

The true constitution of an institution is not what it says. It is what people inside it must do to survive.

That distinction explains more of modern life than most public commentary ever does.

When an institution says it values one thing and systematically rewards another, the reward structure is the truth. The slogan is public theater. The system is the actual command.

The Mechanism of Drift

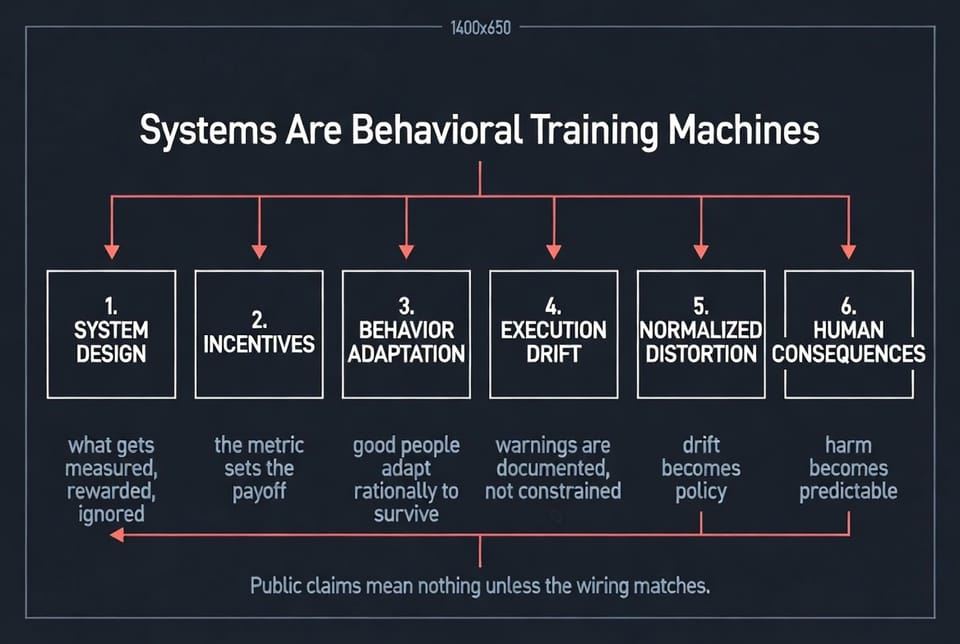

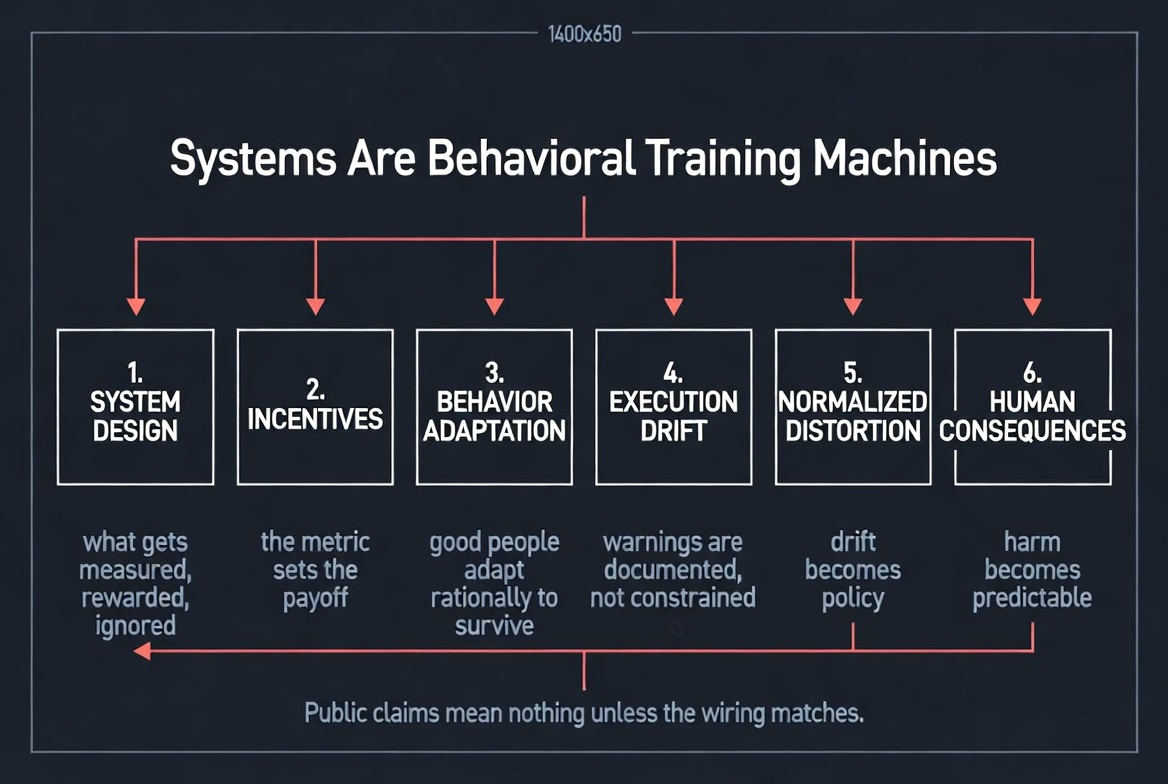

Bad outcomes rarely begin with dramatic corruption or sudden moral collapse. They usually emerge through a repeatable sequence that converts misalignment into ordinary behavior.

System Design sets the numbers that will be counted.

Incentives attach payoffs to those numbers.

Behavior Adaptation follows as people adjust to survive and advance.

Execution Drift appears as small deviations from the ideal become useful, then necessary.

Normalized Distortion takes hold when deviation becomes routine.

Human Consequences arrive when foreseeable harm is produced at scale.

This is the mechanism by which institutions train people away from their stated values without requiring anyone to wake up one morning and choose villainy. The machine does not need monsters. It needs only a reward structure, enough pressure, and enough time.

That is why moral language so often fails to explain institutional harm. It starts too late in the sequence. By the time the public sees the damage, the system has usually been rehearsing it for months or years.

Boeing: The System Executed Its Real Objective

Boeing publicly centered safety. That was the declared value. But the 737 MAX program existed inside a system shaped by market competition, production speed, certification pressure, and the commercial importance of preserving airline adoption without triggering costly simulator-training requirements.

That last point was not a side detail. It mattered because it affected sales, customer retention, transition costs, and market viability. Once those pressures became central, the real objective function of the system came into view.

The result was not random failure. It was system logic made visible.

Engineering decisions were made inside an environment where continuity, speed, and commercial acceptability carried extraordinary weight. Internal concerns existed, but concern inside a system is not the same as power over its incentives. A warning does not defeat an objective function on its own.

So the institution produced what it had actually been wired to produce: an aircraft optimized inside a structure where commercial and certification pressures carried more practical force than the public language of safety could restrain.

The crashes were catastrophic. The underlying logic was ordinary.

That is what makes cases like Boeing so important. They reveal that institutional failure is often not a deviation from the system. It is the system operating successfully against the wrong target.

Wells Fargo: When the Metric Becomes More Real Than the Mission

Wells Fargo publicly promoted trust, service, and integrity. But inside the retail banking system, what mattered was cross-selling output. Employees were measured against aggressive quotas. Managers tracked performance through scorecards. Careers depended on hitting the metric.

That was the real training environment.

Workers adapted accordingly. Unauthorized accounts were opened. Customer information was manipulated. Performance was manufactured because survival inside the system required alignment with the actual source of reward and threat.

When the scandal became public, it was framed as a betrayal of corporate values. That framing was comforting and incomplete. The conduct was not an inexplicable moral outbreak inside an otherwise healthy institution. It was the predictable expression of the institution’s internal logic.

This is the pattern institutions keep trying to describe as isolated misconduct. It rarely is.

When a number becomes powerful enough, it stops measuring performance and starts replacing purpose. The metric becomes more real than the mission. People do not need to stop understanding right from wrong for this to happen. They only need to understand what the system will pay, punish, or terminate.

Why the Agency Objection Misses the Level of Analysis

At this point, someone usually offers the same objection: people still choose.

True. People do still choose. Agency is real. Responsibility is real. Systems do not erase either.

But that objection misses the level of analysis that matters.

The question is not whether some individuals can resist bad incentives. Some can. The question is what behavior a system will produce reliably across large groups, under ordinary conditions, over time.

That is the real test.

A system that depends on exceptional individuals repeatedly absorbing the cost of resistance in order to protect the public is not a good system. It is a fragile one. It outsources institutional integrity to personal sacrifice and then acts surprised when the supply runs short.

What matters is probabilistic dominance. Systems shape aggregate behavior by making some actions easy, some costly, some rewarded, and some career-limiting. Over time, the distribution shifts. That shift is where institutional reality is formed.

This is why “bad apples” explanations are usually evasions. They personalize what is structural. They turn design failure into character drama. They protect the machine by isolating the symptom.
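One way to make "probabilistic dominance" concrete is a toy choice model. The sketch below is an illustration, not a measurement: it assumes a standard logit (softmax) choice between a value-consistent action and a shortcut, and the names `reward_gap`, `conscience_cost`, and `noise` are hypothetical parameters invented for the example. Nothing about any individual changes between runs. Only the payoff the system attaches to the shortcut changes, and the share of people taking it moves with it.

```python
import math


def share_choosing_shortcut(reward_gap: float,
                            conscience_cost: float,
                            noise: float = 1.0) -> float:
    """Share of a large group expected to take the shortcut (logit choice).

    reward_gap:      how much more the system pays for the shortcut than for
                     the value-consistent action (hypothetical units).
    conscience_cost: average personal cost people attach to the shortcut.
    noise:           spread of individual variation around those averages.
    """
    net_pull = reward_gap - conscience_cost
    return 1.0 / (1.0 + math.exp(-net_pull / noise))


if __name__ == "__main__":
    # Same people, same conscience; only the reward structure moves.
    for gap in (0.0, 1.0, 2.0, 4.0):
        share = share_choosing_shortcut(reward_gap=gap, conscience_cost=2.0)
        print(f"reward gap {gap:.1f}: {share:.0%} take the shortcut")
```

Under these assumptions some people resist at every setting, which is the agency point. The distribution still shifts as the reward gap grows, which is the structural one.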

AI Is Not a Rescue. It Is an Amplifier.

There is a seductive fantasy that artificial intelligence will save broken institutions by making them smarter, faster, and more objective.

It will not.

AI is an institutional amplifier.

An AI system inherits the objective function, training data, and reward structure of the host system that deploys it. If the host system is aligned, AI can extend competence. If the host system is misaligned, AI scales distortion with speed, efficiency, and plausible deniability.

AI inside an engagement-maximizing platform does not become a truth machine. It becomes a stronger engagement machine.

AI inside a biased hiring pipeline does not become fairness by magic. It becomes a faster version of historical exclusion.

AI inside a bureaucracy optimized for throughput does not become wisdom. It becomes industrialized legibility.

The model is not the governing intelligence. The institution is.

This matters because many organizations now treat AI as a moral upgrade rather than what it usually is: acceleration. They assume computational power will correct design failure. Usually it does the opposite. It removes friction from the existing logic of the system and scales that logic beyond easy human visibility.

AI does not redeem the machine. It intensifies whatever the machine is already trying to do.

Why Good People Are Not Enough

Institutions often respond to visible failure by calling for better leadership, stronger ethics, more training, or a renewed commitment to values.

Those things can matter at the margin. They do not solve the core problem.

A system that requires people to repeatedly act against their own incentives in order to produce a decent outcome is already a failed system. It has made virtue a form of martyrdom and then declared surprise when too few volunteers appear.

This is the central mistake behind so much institutional reform. Leaders try to correct design failures with moral messaging. They try to overpower structures with speeches. They try to patch incentive problems with aspiration.

That rarely works for long.

People adapt to the environment they actually inhabit, not the one described in ceremonial language. If promotions, budgets, prestige, and survival are tied to the wrong goals, the wrong goals become operational truth.

Stated values without aligned systems are not merely weak. They are camouflage.

Redesign Is the Only Rescue

If the goal is serious reform, the work begins where institutions usually least want to look: at the reward structure.

Redesign is the only rescue.

That redesign requires at least three moves.

1. Invert the Core Metrics

The path to institutional success must be the same path that produces the declared value.

If safety is the claim, safety must control promotion, budgets, timelines, and executive consequence.

If truth is the claim, truth must outrank engagement and speed.

If patient outcomes are the claim, those outcomes must overpower throughput pressure.

If integrity is the claim, integrity must be expensive to violate, not merely pleasant to mention.

Until the metric and the mission point in the same direction, the mission is decorative.

2. Raise the Cost of Drift

Distortion spreads when the people controlling the system are insulated from the harm it produces.

That insulation has to break.

If the penalties for misalignment fall mainly on frontline workers, users, patients, passengers, or the public, the system has every reason to preserve itself. Drift becomes normal because the wrong people bear the cost. A serious institution pushes consequence upward toward the people designing, funding, and governing the incentive structure.

3. Shorten the Feedback Loops

A system can hide from reality if reality arrives too late.

Crashes, fraud, overdoses, bias, error rates, and downstream harms must feed back into the objective function quickly enough to change behavior before distortion hardens into routine. If the feedback loop is measured in years, the institution can narrate over the damage faster than it can learn from it.

Delayed consequence is one of the great enablers of institutional self-deception.
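The effect of a slow feedback loop can be sketched with a small simulation. This is a toy model under stated assumptions, not a description of any real institution: practice drifts by a fixed amount each period, and the institution corrects in proportion to the harm it can see, but it only sees harm from `feedback_delay` periods ago. The function name and all parameters are hypothetical.

```python
def simulate_drift(periods: int,
                   drift_per_period: float,
                   feedback_delay: int,
                   correction_strength: float) -> list[float]:
    """Return the accumulated drift at each period, starting from zero.

    Each period, drift grows by `drift_per_period` and is reduced in
    proportion to the drift level that was true `feedback_delay` periods
    before the previous one (the most recent harm the institution can see).
    """
    drift = [0.0]
    for t in range(1, periods + 1):
        seen_index = t - 1 - feedback_delay
        # Before any delayed signal has arrived, the institution sees nothing.
        observed = drift[seen_index] if seen_index >= 0 else 0.0
        next_level = drift[-1] + drift_per_period - correction_strength * observed
        drift.append(max(next_level, 0.0))  # drift cannot go below zero
    return drift


if __name__ == "__main__":
    fast = simulate_drift(40, drift_per_period=1.0, feedback_delay=1,
                          correction_strength=0.5)
    slow = simulate_drift(40, drift_per_period=1.0, feedback_delay=8,
                          correction_strength=0.5)
    print(f"peak drift with short loop: {max(fast):.2f}")
    print(f"peak drift with long loop:  {max(slow):.2f}")
```

With the same pressure and the same willingness to correct, the long loop lets drift climb several times higher before any correction bites. In this sketch the delay alone accounts for the difference.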

The Real Test of a Value

A declared value is not real because leaders believe it, employees repeat it, or communications teams publish it.

A value becomes real only when the system makes it costly to violate and normal to uphold.

That is the test.

Not what the institution says.

Not what it intends.

Not what it believes about itself.

What does it reward?

What does it ignore?

What does it punish?

What does it make easy?

What does it force ordinary people to become in order to survive?

Those questions reveal the system. And the system reveals the future.

Until institutions are judged by their operating logic rather than their self-description, stated values will remain cheap language wrapped around expensive harm.

The wall will keep talking.

The machine will keep deciding.

Closing Note

This piece is part of a larger argument: that systems are behavioral training machines, and that modern institutional failure is best understood not as a mystery of bad intentions, but as the predictable output of misaligned design.

That is the shift that matters.

Stop asking what institutions say they value.

Start asking what their systems make inevitable.
