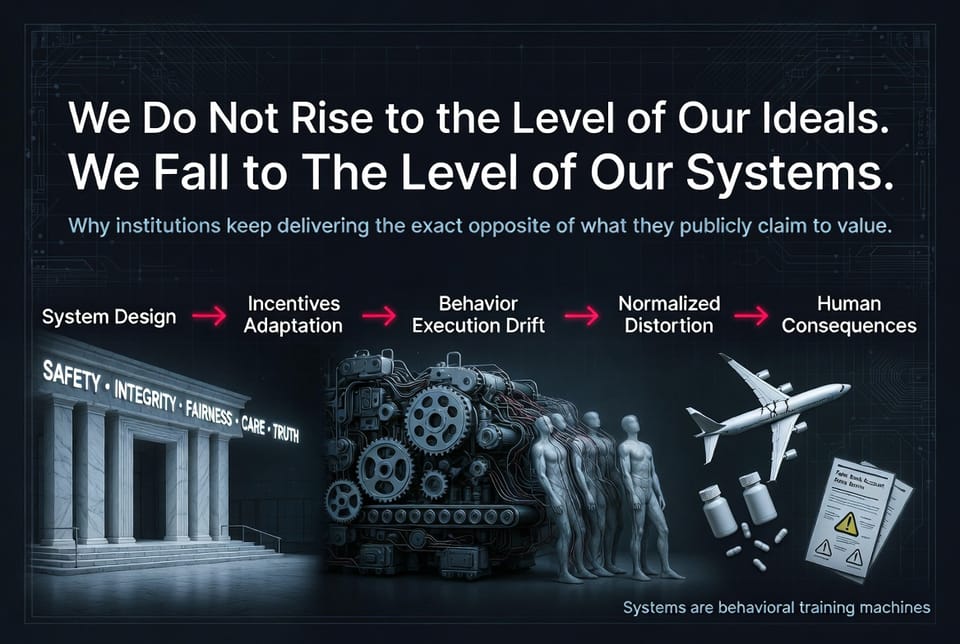

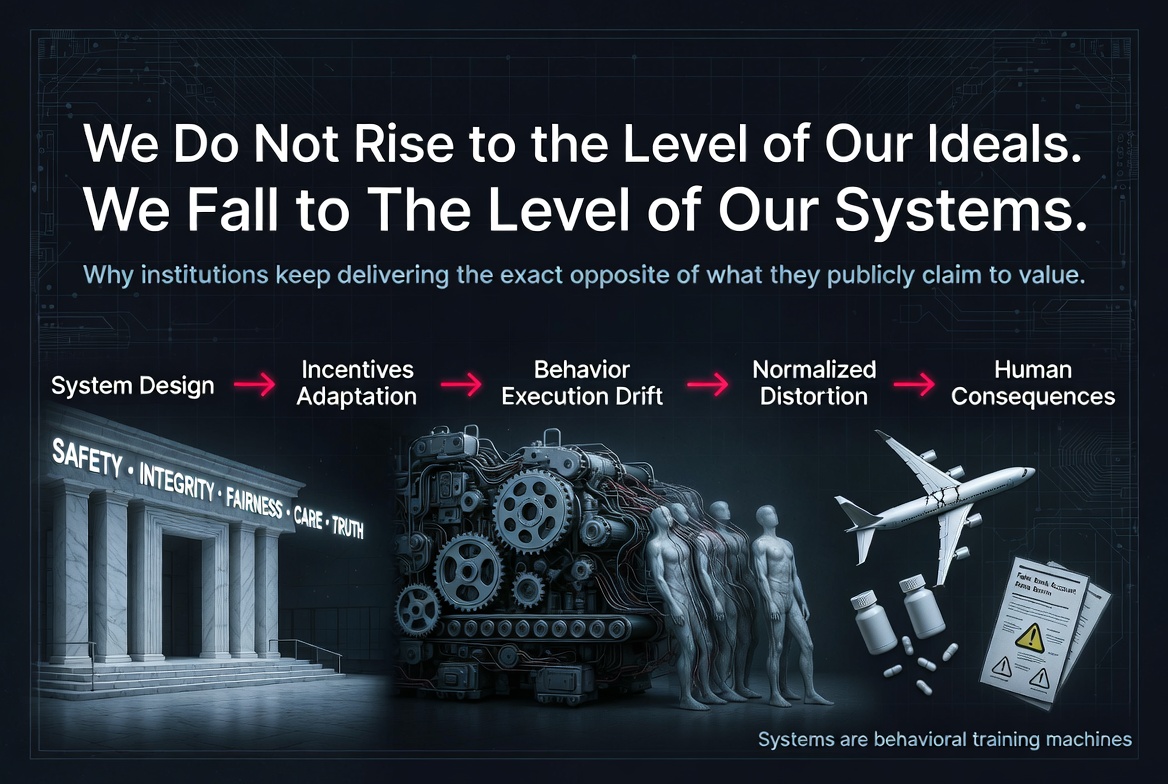

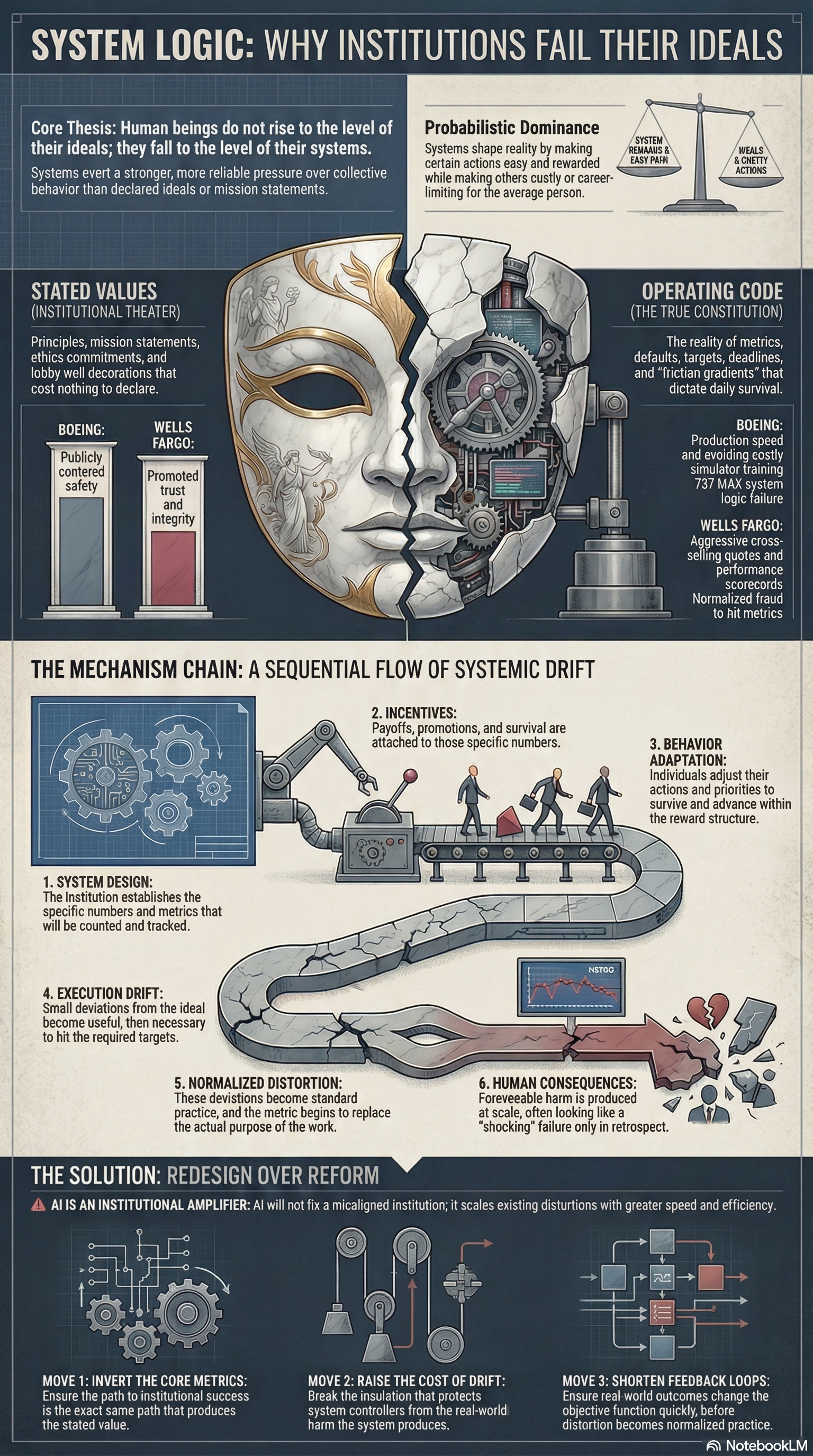

We Do Not Rise to the Level of Our Ideals. We Fall to the Level of Our Systems

Human beings do not rise to the level of their ideals. They fall to the level of their systems.

That is not poetry. It is mechanics.

Every institution announces the same virtues: safety, integrity, fairness, accountability, care, truth. Mission statements are framed. Speeches are rehearsed. The language is always available. Then the machine runs.

What actually shapes behavior is not the language. It is the wiring: what gets measured, what gets rewarded, what gets punished, what gets ignored, and what gets made frictionless.

Systems are behavioral training machines. What they reward becomes normal.

Institutional failure is rarely a story about bad people. It is a story about good people adapting rationally to a badly designed machine. The metric sets the payoff. The payoff shapes behavior. Behavior adapts to survive. Drift becomes policy. Distortion becomes normal. Harm becomes predictable.

This is why scandals are so often misdiagnosed as system failures. In most cases the system did not fail. It succeeded at exactly what it was wired to do.

When Boeing publicly declared safety its highest priority while internally tying executive bonuses and certification deadlines to the 737 MAX delivery schedule, the two fatal crashes were not a betrayal of the system. They were its output. Internal warnings existed. Schedule pressure won.

When Purdue Pharma told the world it existed to relieve suffering while paying sales representatives bonuses based on prescription volume, the opioid crisis was not a moral accident. It was the foreseeable result of a compensation structure that made volume the number that mattered.

When Wells Fargo publicly celebrated customer relationships while tying branch-manager compensation and job security to aggressive sales quotas, the creation of millions of fake accounts was not rogue behavior. It was the culture translated into daily operations.

The gap between public claim and operational design is not hypocrisy. It is the system revealing its true objective function.

At scale, systems exert stronger and more reliable pressure on collective behavior than declared ideals. Agency still exists. Warnings are issued. Whistleblowers speak. Yet unless those warnings can rewrite incentives, they become documentation, not control.

This pattern repeats because reform almost never touches the wiring. After every scandal the ritual is identical: apology, review, reshuffle, ethics training, new slogans. Then the machine resumes, because the metric never changed.

The same logic now accelerates with AI.

AI does not fix broken systems. It scales their logic at machine speed. Feed it a distorted proxy (engagement over truth, speed over safety, volume over health) and it will optimize that proxy faster, cheaper, and with a false patina of neutrality. The mechanism is unchanged. Only the velocity increases.

The inverse is also true. When the objective function matches the public claim, when validation is external and beyond the system's control, and when consequences flow back faster than drift can become normal, the same structural logic produces value with mechanical consistency.

The real diagnostic questions are never about stated values.

What is the system actually measuring?

What does survival inside it depend on?

Who chose the objective function, and what were their incentives?

How quickly do real-world consequences re-enter the machine?

Civilizations do not become humane because they announce humane values. They become humane when they build institutions whose reward structures make humane behavior the path of least resistance.

The system is not broken.

It is working exactly as designed.

That is the diagnosis.

That is also the only place the solution has ever lived.
